Month: March 2017

Network failure isn’t an “if” — it’s “when.” This is the motto for proponents of Disaster Recovery as a Service solutions. As the world saw at the end of February 2017, even stalwart cloud providers are not immune to downtime. When Amazon’s S3 web-based storage service went down, websites crashed and connected devices failed as S3 struggled to cope. Even Amazon’s health marker graphics failed to update properly, since those graphics also relied on S3. During the outage they showed “all green,” despite ample evidence to the contrary.

The cause? “High error rates with S3 in US-EAST-1,” which impacted more than 140,000 websites and 120,000 domains. Despite the failure, Amazon managed to bounce back in less than three hours, which makes the incident a clear-cut case for disaster recovery (DR). For IT professionals, outages like this are often front and center when it comes to convincing C-suites they should invest in reliable DR. The problem? Sweeping outages caused by natural disasters or massive error rates are the exception, not the rule, leading many executives to wonder if DR is necessary or just another IT ask. Here’s how to craft a convincing argument for solid DR spend.

Unpack the Scope of “Disaster”

What’s the source of sudden, unplanned IT downtime? The answer seems simple — “disasters” — but it’s not quite so straightforward. Mention disaster to the C-suite and you conjure up images of hurricanes, wildfires and massive storms. They take a significant toll: Hurricane Sandy caused huge problems in 2012, flooding data centers and knocking out power for companies across the Eastern Seaboard.

Yet there’s another side to disasters. In Iowa, one small electrical fire caused widespread government server outages, shutting down much-needed services and leading to more than $162 million in payments for affected employees, vendors and citizens. That’s the problem with catchall terms like “disaster” — while it’s easy to conceptualize massive weather issues or technology failures, it’s often smaller-scale events that lead to big problems.

For IT pros, this means conversations with C-suite members about DR investment should always include a discussion of probable causes: walk them through what happens if a water pipe breaks in your data center and leaks onto hardware, or if cleaning staff accidentally pull power cords and your servers go dark. What starts as a “minor” issue can quickly escalate, even when companies take reasonable precautions.

Making the Case for Disaster Recovery

While providing more detail on disaster potential is usually enough to get C-suite attention, IT pros will likely find this kind of doom-and-gloom discussion won’t lead to DR spending on its own. Part of the problem stems from probability; how likely are the scenarios you describe, and can they be mitigated through more cost-effective means? It makes sense — why invest in disaster recovery if better staff training and preventative building maintenance will do the trick?

Success here demands clear communication about the impact of IT outages. Costs of downtime are a good place to start — midsize companies are looking at more than $50,000 per hour of downtime, while large enterprises could shell out $500,000 or more. Also worth mentioning? More variable costs, such as loss of customers choosing to abandon your brand for a competitor or negative publicity surrounding public outages.
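To put the hourly figures above in front of executives, a short calculation can help. The sketch below is illustrative only: the three-hour outage length and the annual DR budget are assumptions chosen for the example, not figures from any provider.

```python
def downtime_cost(hours_down: float, cost_per_hour: float) -> float:
    """Direct cost of an outage: hours offline times the hourly cost."""
    return hours_down * cost_per_hour


def breakeven_hours(annual_dr_cost: float, cost_per_hour: float) -> float:
    """Hours of avoided downtime per year needed to pay for the DR spend."""
    return annual_dr_cost / cost_per_hour


# Hourly costs quoted in the text above.
MIDSIZE_COST_PER_HOUR = 50_000
ENTERPRISE_COST_PER_HOUR = 500_000

# Assumptions for illustration only.
outage_hours = 3           # hypothetical outage length
annual_dr_cost = 100_000   # hypothetical yearly DR budget

midsize_loss = downtime_cost(outage_hours, MIDSIZE_COST_PER_HOUR)
enterprise_loss = downtime_cost(outage_hours, ENTERPRISE_COST_PER_HOUR)
midsize_breakeven = breakeven_hours(annual_dr_cost, MIDSIZE_COST_PER_HOUR)

print(f"Midsize loss: ${midsize_loss:,.0f}")
print(f"Enterprise loss: ${enterprise_loss:,.0f}")
print(f"Midsize break-even: {midsize_breakeven} hours of avoided downtime")
```

With these assumed inputs, the DR budget pays for itself once it prevents two hours of downtime a year at a midsize company. Variable costs such as lost customers are not modeled here.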

There’s another side to the DR story: The increased revenue of uptime. Since it’s tempting to think of “always on” as the default position for IT service, it’s easy to underestimate the benefits of keeping infrastructure on track. However, staying operational means more than just green lights across the board: access to network and cloud services allows employees to collaborate, customers to engage with front-line staff, and C-suite members to oversee daily operations. Any interruption to these processes limits your overall efficacy.

Choose Your DRaaS Solution Wisely

Last but not least? Come prepared, and make it clear that you’ve got the knowledge to choose wisely. Here’s why: C-suite executives have budgets to plan and costs to justify, and aren’t interested in handing over resources to IT if managers can’t provide specifics. While there’s no need to transform executives into tech experts, it’s worth doing your homework and coming to the table with at least a few ideas.

First, examine general options for safeguarding your data in the event of a disaster. What you need depends on two things: What you can afford to spend, and what you can afford to lose. For example, synchronous data backups give you second-by-second duplication of critical information, so it’s always accessible even if servers go down. They’re also the most expensive option. Asynchronous solutions cost less but come with delays ranging from seconds to minutes, meaning that if disaster strikes, you lose some of your data. Do the math before the meeting: does the cost of synchronous, active recovery outweigh the potential loss of data, or is this a line-of-business (LoB) necessity?
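One rough way to “do the math” is to compare the expected yearly value of data lost to asynchronous replication lag against the extra yearly cost of going synchronous. Every number below is a hypothetical input you would replace with your own estimates, and the model is deliberately simple: it assumes data is lost at a constant value per second of lag.

```python
def expected_annual_data_loss(incidents_per_year: float,
                              replication_lag_seconds: float,
                              value_per_second: float) -> float:
    """Expected yearly value of data written during the lag window and lost."""
    return incidents_per_year * replication_lag_seconds * value_per_second


def synchronous_is_worth_it(sync_premium_per_year: float,
                            incidents_per_year: float,
                            replication_lag_seconds: float,
                            value_per_second: float) -> bool:
    """True when the expected loss under async exceeds the synchronous premium."""
    loss = expected_annual_data_loss(
        incidents_per_year, replication_lag_seconds, value_per_second)
    return loss > sync_premium_per_year


# Hypothetical scenario: two incidents a year, two minutes of replication lag.
low_value = synchronous_is_worth_it(25_000, 2, 120, 40)    # $40 of data per second
high_value = synchronous_is_worth_it(25_000, 2, 120, 500)  # $500 of data per second
print(low_value, high_value)
```

In the first scenario the expected loss ($9,600) is below the assumed $25,000 premium, so asynchronous replication looks sufficient. In the second ($120,000) it is not.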

In addition, consider the type of DR service that best suits business needs. While it’s possible to build something in-house from the ground up, most companies can’t afford this kind of time and investment. Other options include self-service, cloud-based DRaaS and fully managed DRaaS — each offers increasing oversight from third-party providers with an additional cost. Taking the time to research potential providers, monthly costs and service levels helps IT make the case to C-suites in familiar executive terms.

You need disaster recovery, but that doesn’t mean your budget is guaranteed. Want to make an effective pitch to the C-suite? Detail disaster potential, make a compelling case, and come prepared with a plan.

Updated: January 2019

Explore HorizonIQ's

Managed Private Cloud

LEARN MORE

Stay Connected

AWS How-To: Choosing The Right Instance Size And Pricing For EC2

Hyperscale public cloud computing comes with the potential of huge cost savings, but can just as easily get out of hand and result in big spend with no measurable results.

Why the dichotomy of outcomes? It all stems from the ease of deploying public cloud services: With virtually no time and effort, IT pros and front-line staff alike can spin up EC2 instances and start working. While this streamlines the process of accessing resources and applications, it can also lead to issues with “wrong-sized” instances — deployments that are too big for your needs and end up costing more than they save — or problems with the wrong pricing model, leaving you on the hook for services or resources you simply don’t need.

Want to avoid possible problems with AWS sizing and spend? Two options: Schedule a free consultation with an AWS-certified engineer at the link below, or learn the old-fashioned way by reading the following reference guide.

What to evaluate when considering EC2 Instance Types?

Why does the type of cloud instance matter? Since they all fall under the AWS umbrella, aren’t they effectively the same? Not quite. Some offer substantially more memory, a focus on CPU optimization or accelerated GPU performance, while others provide a more generalized set of services and resources.

By selecting a solution based on a specific need rather than generalized cloud expectation, you save time and money while staff will get the best cloud service for their workload. Not sure which of the EC2 instance types is right for you? Let’s take a look:

General Purpose (T2, M4 and M3) — These instance types are designed for small to midsize databases and data processing tasks, for running back-end servers (such as Microsoft SharePoint or SAP), and cluster computing. For example, T2 instances are defined as “burstable performance,” which lets companies burst above the baseline as needed, using high-frequency Xeon processors. M4 and M3 instances are the “next generation” of general purpose and provide a balanced profile of compute, memory, and network resources. The big difference? M3s top out at 30 GiB of RAM while M4s offer up to 256 GiB.

Compute Optimized (C4, C3) — Here, the use case is for front-end fleets, web servers, batch processing, and distributed analytics. They feature the highest-performing processors combined with low price-to-compute performance. C3s support enhanced networking and offer support for clustering, while C4s are EBS-optimized by default and offer the ability to control processor C-state and P-state in the c4.8xlarge instance type.

Memory Optimized (X1, R4, R3) — X1 instances are recommended for in-memory databases like SAP HANA, while R3s offer a lower price per GiB of RAM for high-performance databases. R4s, meanwhile, are ideal for memory-intensive apps and offer even better RAM pricing than R3.

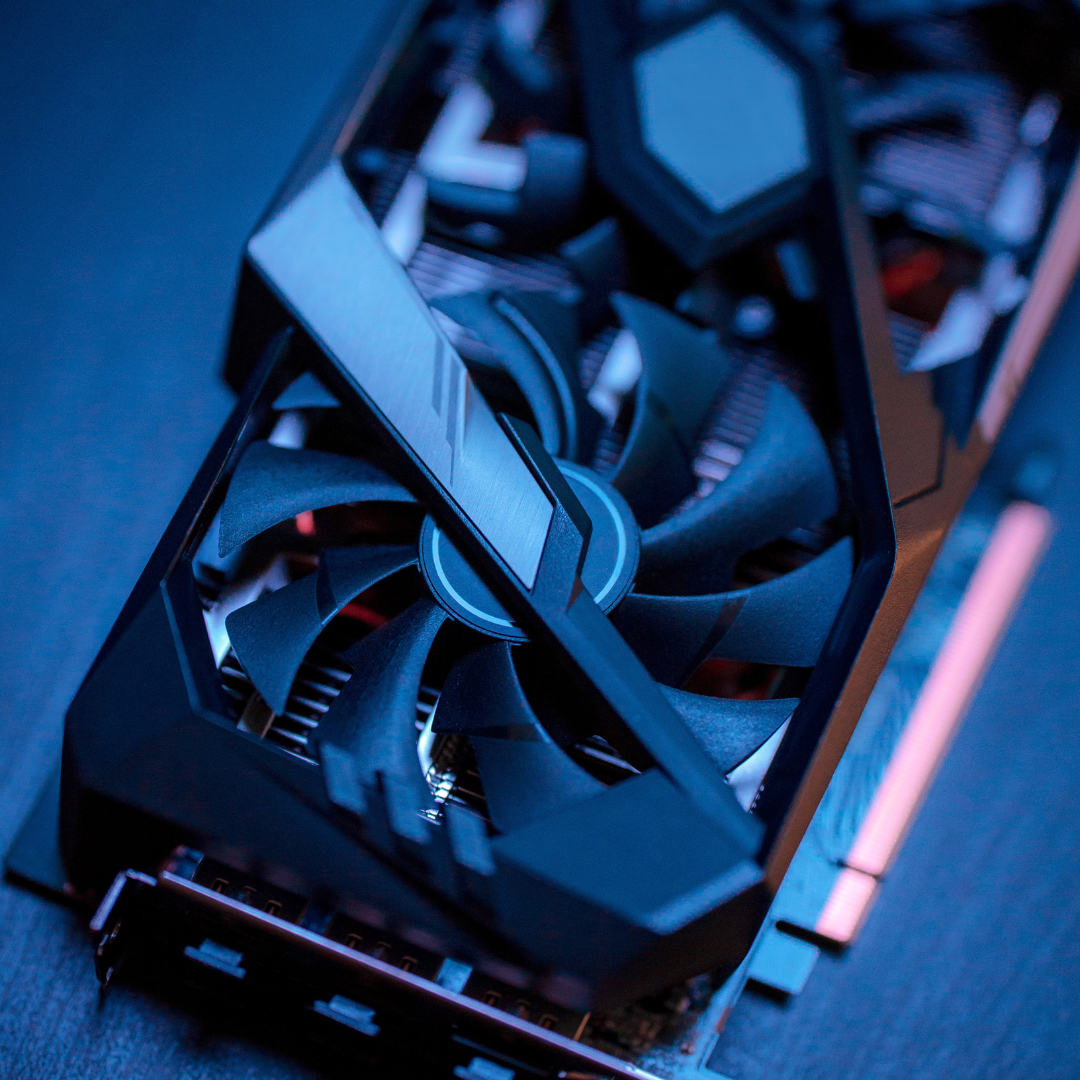

Accelerated Computing Instances (P2, G2, and F1) — Ideal for graphically intensive workloads. P2 instances are designed for most general-purpose GPU apps, G2s are optimized for GPU-heavy applications, and F1s come with customizable hardware acceleration.

Storage Optimized (I2 and D2) — Running scale-out systems or transactional databases? I2 instances are designed to provide maximum IOPS at a low cost. D2s, meanwhile, are built for dense storage, with up to 48 TB of HDD-based local storage.

What does it cost to run any of these instances in EC2?

The answer is, “it depends.” It depends on which pricing model you select — choosing the right model for your needs often makes the difference between cloud-as-cost-center and AWS as a bottom-line benefit. There are four basic types of AWS EC2 pricing:

1. On-Demand — The one with which most companies are familiar: You pay for compute capacity by the hour with no long-term contracts or the need for upfront payments. Compute capacity can be increased or decreased as needed. Flexibility is the key benefit here, making on-demand ideal for workloads with fluctuating traffic spikes or app development projects.

2. Spot Instances — In this pricing model, you bid on spare EC2 computing space. The big advantage? You could save up to 90 percent off the on-demand price. The problem? There’s no guarantee — availability varies depending on current server load, making this an ideal fit for apps that make sense in the cloud only with low compute prices or if you suddenly find yourself in need of an immediate and short-term capacity boost.

3. Reserved Instances — Reserved instances allow you to purchase capacity in a specific availability zone; you get the confidence of reserved EC2 compute power at up to 75 percent off the price of on-demand instances. To access reserved instances, however, you must commit to a one- or three-year term, paying all, part or none of the cost upfront. As a result, this pricing model works best with steady-state applications; cost savings come from consistent use over time.

4. Dedicated Hosts — Here, you purchase a dedicated EC2 server that lets you use existing server-bound software licenses such as Windows Server or SUSE Linux Enterprise Server. Choose hourly on-demand or reservation for up to 70 percent off the on-demand price.

The bottom line when it comes to selecting the ideal mix of EC2 instance type and pricing model? Don’t spend on more than you need; AWS isn’t capacity-limited, meaning it makes more sense to align cloud resources with existing workloads rather than trying to “build in” potential capacity overruns. Need a general-purpose cloud for app development? Opt for M3 and on-demand pricing. Need a more GPU-intensive solution for long-term projects? Consider G2 offerings in a reserved instance.
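The trade-off between on-demand and reserved pricing comes down to utilization. The sketch below assumes a hypothetical on-demand rate of $0.10 per hour and the best-case 75 percent reserved discount mentioned above; real rates and discounts vary by instance type, region and term, so treat the numbers as placeholders.

```python
HOURS_PER_MONTH = 730  # average hours in a month (8,760 / 12)


def on_demand_monthly(rate: float, hours_used: float) -> float:
    """On-demand: pay only for the hours the instance actually runs."""
    return rate * hours_used


def reserved_monthly(rate: float, discount: float) -> float:
    """Reserved: discounted rate, but paid for every hour of the month."""
    return rate * (1 - discount) * HOURS_PER_MONTH


def breakeven_utilization(discount: float) -> float:
    """Fraction of the month an instance must run before reserved wins."""
    return 1 - discount


def cheaper_model(rate: float, hours_used: float, discount: float) -> str:
    """Name the cheaper of the two models for a given monthly usage."""
    if reserved_monthly(rate, discount) < on_demand_monthly(rate, hours_used):
        return "reserved"
    return "on-demand"


RATE = 0.10      # hypothetical on-demand price per hour
DISCOUNT = 0.75  # best-case reserved discount quoted above

print(cheaper_model(RATE, 100, DISCOUNT))              # light, bursty usage
print(cheaper_model(RATE, HOURS_PER_MONTH, DISCOUNT))  # always-on workload
print(breakeven_utilization(DISCOUNT))
```

Under these assumptions an instance has to run more than a quarter of the month before a reservation beats on-demand, which is why reserved pricing suits steady-state applications.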

Spending is easy in the cloud. Leveraging the right resources at the right price point is the hard part.

It never fails — the moment you plug a server into a publicly accessible network, you become a target.

Ouch, what a downer way to start a blog post, right?

Well, unfortunately, the truth and impact of this statement are among the most overlooked nuggets of information I could ever think to offer someone managing a server or web application.

Whether you’re a financial firm holding sensitive and nefariously sought-after data, or a hobbyist blogging about obscure collectible cheese graters, attempts at intrusions will happen.

Why the inevitability? Brute force login attacks make it extremely easy for hackers to target you.

Before I explain how they work and how to defend yourself, it’s important to address a common (and dangerous) misconception regarding network security: the idea that there needs to be a motive for the attack or intrinsic value in the system being targeted. While that might be true for someone sitting behind a keyboard manually attempting to guess your password, the vast majority of attempts to break security on a server are automated and not targeted at anything or anyone in particular.

What Are Brute Force Attacks?

Brute force attacks are comparable to what is playfully known as the “infinite monkey theorem,” which posits that given an infinite amount of time, even a monkey typing randomly could reproduce Shakespeare.

If a hacker were to set an objective as simple as, “I want to log in to a server, and not particularly any specific server,” they would just need to automate a process that “guesses” passwords on many, many different servers, and quickly.

Conceptualizing the brute force script doesn’t take much creativity either:

TARGET IP address 0.0.0.1 over whatever remote protocol you wish to log in to.

IF the server responds back asking for a username, use “ADMIN,” since that’s statistically pretty common.

IF the server asks for a password, use one from this dictionary file.

REPEAT, move on to the next port/IP until you get in.

Something as lightweight as that could run hundreds of thousands of times per second, against as many IP addresses as the attacking computer’s resources could handle.

Brute force attacks are a “set it and forget it” way of hacking, and unfortunately, they are extremely effective.

Preventing Brute Force Attacks

However, this method of security breach is extremely easy to defend against. We just have to think like the script. If you’re a managed hosting provider customer, your vendor should already be covering this for you. If you’re on your own, however, here are three tactics to defend yourself against a brute force attack:

1. Reduce the Surface Area

If a brute force script relies on the presence of a visible port in order to access your server, don’t give it the time of day! One of the best security tips to follow in any scenario is to reduce the “surface area” your server has over public networks. That means if you can limit access to a port on a firewall, do so. Scope a remote access port to your specific static IP so that no one else can even make login attempts on that port. Don’t have a static IP? Configure a VPN and scope that VPN’s range so that only those users may access sensitive service ports. The more you can do to limit network access to these important ports, the better.
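The scoping logic is the same whether it lives in a firewall rule or in application code: accept a connection only if its source address falls inside an approved range. Here is a minimal Python sketch of that check. The addresses are placeholders drawn from documentation and private ranges, and in practice this rule belongs in your firewall rather than in a script.

```python
import ipaddress

# Hypothetical allow-list: one static office IP and one VPN client range.
ALLOWED_NETWORKS = [
    ipaddress.ip_network("203.0.113.10/32"),  # placeholder static IP
    ipaddress.ip_network("10.8.0.0/24"),      # placeholder VPN range
]


def is_allowed(source_ip: str) -> bool:
    """Return True only if the source address is inside an allowed network."""
    address = ipaddress.ip_address(source_ip)
    return any(address in network for network in ALLOWED_NETWORKS)


print(is_allowed("203.0.113.10"))  # the office IP
print(is_allowed("10.8.0.57"))     # a VPN client
print(is_allowed("198.51.100.7"))  # anyone else
```

Everything outside the list is refused before a login prompt is ever shown, so an automated script has nothing to guess against.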

2. Don’t Be Predictable

Brute force scripts are crafted based on a game of statistics. If the two components of a successful access attempt are a correct user name and a correct password, make sure neither is “predictable” or “simple.” For instance, I mentioned earlier that “Admin” was a common user name. It’s a general default username that service developers and hardware manufacturers expect you to change in most cases. Don’t give away half of your security advantage by using a default username convention. Be creative! Instead of “Admin”, try “Admin-<your name here>” or something equally specific to your usage of that login. On this same note, avoid using common passwords.

Believe it or not, in 2017, there are still commonly used passwords that typically represent a numeric sequence (such as 123456) or an equally predictable word (such as “password” or “password123”). Avoid single, simple words and names, as these are very common in “dictionary files” (I mean, sometimes they’re literally dictionaries.)
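A simple pre-flight check can catch the worst offenders before an account is created. The sketch below is a rough illustration, not a complete password policy: the word lists are tiny samples (the entries “administrator” and “root” are my own additions), and a real deployment would check against a much larger list of known-bad passwords.

```python
# Small sample lists for illustration; real lists are far longer.
COMMON_USERNAMES = {"admin", "administrator", "root"}
COMMON_PASSWORDS = {"123456", "password", "password123"}


def is_predictable_username(username: str) -> bool:
    """Flag default-style usernames, ignoring case and stray whitespace."""
    return username.strip().lower() in COMMON_USERNAMES


def is_predictable_password(password: str) -> bool:
    """Flag known-common passwords, all-digit strings and single plain words."""
    lowered = password.lower()
    if lowered in COMMON_PASSWORDS:
        return True
    # All digits (numeric sequences) or all letters (a lone word or name).
    return lowered.isdigit() or lowered.isalpha()


print(is_predictable_username("Admin"))         # default username
print(is_predictable_username("Admin-jsmith"))  # scoped to a person
print(is_predictable_password("cheddar"))       # single dictionary word
print(is_predictable_password("correct-horse-7-battery"))
```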

3. Add a Step

You can throw a wrench in the brute force works by introducing an additional variable during the login phase. The VPN idea I mentioned earlier is ideal, because in order to get into a properly scoped service port, you’d need to break both the credentials to the VPN connection, and the credentials to the service login you’re attempting to exploit.

Another effective and increasingly popular idea for adding an additional layer of security is “multi-factor authentication.” This option requires users to hit “accept” on a phone app when attempting to log in, or requires them to be dialed by an automated caller and press 1 for verification. In these cases, the malicious third party would need the user’s phone, user name, password, and possibly VPN credentials.

To recap, brute force attacks make it easy to target servers indiscriminately. But they’re also easy to prevent. These sorts of attacks are typically going for “low-hanging fruit,” so following the above steps for public-facing servers will tremendously reduce your risk of compromise.
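The push-approval and phone-call methods described above depend on a vendor’s service, so they’re hard to show in a few lines. As a stand-in, the sketch below uses a different but widely deployed second factor: time-based one-time codes (TOTP, defined in RFC 6238), the six-digit numbers generated by authenticator apps. The `login_allowed` helper and the shared secret are hypothetical, and a production system should rely on a maintained library rather than hand-rolled code.

```python
import hashlib
import hmac
import struct


def totp(secret: bytes, at_time: float, digits: int = 6, step: int = 30) -> str:
    """Compute an RFC 6238 time-based one-time code using HMAC-SHA1."""
    counter = int(at_time // step)  # number of 30-second windows since epoch
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F      # dynamic truncation (RFC 4226)
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def login_allowed(password_ok: bool, submitted_code: str,
                  secret: bytes, now: float) -> bool:
    """Require both a correct password and the current one-time code."""
    expected = totp(secret, now)
    return password_ok and hmac.compare_digest(submitted_code, expected)


# Shared secret and timestamp taken from the RFC 6238 test vectors.
SECRET = b"12345678901234567890"
print(totp(SECRET, 59))  # 287082
print(login_allowed(True, "000000", SECRET, 59))
```

Even with a guessed password, a brute force script would also have to guess a code that changes every 30 seconds.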

Downtime happens.

Recently (February 28th, 2017), the largest cloud provider in the galaxy and possibly the universe—we’re unsure how far alien technologies have progressed—suffered a huge outage that affected a massive number of customers. This isn’t meant to throw stones at AWS (Amazon Web Services); all technologies are at risk of suffering downtime be it at the data center, in your network, through your cloud provider or the physical equipment you utilize. AWS isn’t the first cloud provider to have a failure and I can promise you that they won’t be the last. They’re a good fit for many folks, which is why they’ve become an industry leader.

What this recent catastrophic crash does do, however, is raise the all-too-important point that single points of failure are everywhere. If you’re in charge of making sure your customers or employees have access to your online presence, it’s your job to eliminate them. It’s a tall task, no doubt—but it’s achievable. This is going to be a hot topic for the remainder of 2017 and likely part of many job descriptions moving forward.

Let’s take a look at some of the most common portions of Internet infrastructure builds to identify and suggest ways to eliminate these single points of failure.

How to remedy:

- Move static portions of your cloud load to geographically dispersed edge data centers in colocation builds. Clouds are great for flexibility but don’t offer the performance, reliability and significant cost-savings that having your own hardware housed in a premium data center does.

- Use more than one cloud provider. This may sound like an obvious fix, but don’t put all of your eggs in one basket. The physical location of the computers that house these cloud instances has a significant impact on latency and therefore customer experience, so make sure they’re close to where your customers are.

- Ensure that the cloud / hosting instances are housed in data centers that are highly redundant.

- Make sure you’re either currently in or can move to a colocation provider with multiple geographically diverse edge data centers close to one or more of your customers’ physical presence. Make sure to have more than one data center / colocation presence to protect against catastrophic failure.

- If you don’t have an on-site engineer, make sure that the facilities you’re considering offer remote hands service from an actual engineer. If your hardware fails or you need a reboot, not being able to access your failed hardware is the most helpless feeling in the world.

- At a minimum, you want to be connected to more than one Network Service Provider (NSP). Just like all cloud providers go down, all network service providers have issues and can go down. Very few offer Service Level Agreements (SLAs) higher than four nines (99.99%), let alone 100% (shameless plug, we do).

- Alternatively, you can connect to an optimized IP service that ensures your traffic is traveling the best possible route all of the time. These technologies work by optimizing for latency, packet loss and jitter and directing your traffic to the optimal path over a handful of different NSPs. What’s more, if any one of those NSPs has any issues at all, your customers won’t feel it as they’ll be routed around the problem to a different NSP.

- If you’re already multi-homed (connected to more than one NSP) and are running BGP, consider a route optimization appliance that will automatically flip you to the best performing NSP—or away from one which is failing.

- You want to make sure that your hardware is current and has sufficient life left. This is commonly referred to as a “refresh” and depending on the type of servers you’re using and what they’re asked to do, a refresh will likely be required anywhere from every six months to every five years.

- Make sure you have several spare servers housed in your cage or storage area at the data center, just in case you need your on-site engineer or colocation provider’s remote hands service to remedy the situation.
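The arithmetic behind “four nines” and behind using more than one provider is worth seeing once. The sketch below converts an availability figure into minutes of downtime per year, then estimates the combined availability of redundant providers. It assumes the providers fail independently, which is optimistic in practice since shared dependencies can take down several at once, and the 99.9% figures are examples rather than any vendor’s SLA.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600


def downtime_minutes_per_year(availability: float) -> float:
    """Minutes per year a service may be down at a given availability."""
    return (1 - availability) * MINUTES_PER_YEAR


def combined_availability(*availabilities: float) -> float:
    """Availability of redundant providers, assuming independent failures.

    The combination is only down when every provider is down at once.
    """
    all_down = 1.0
    for availability in availabilities:
        all_down *= (1 - availability)
    return 1 - all_down


print(round(downtime_minutes_per_year(0.9999), 2))  # 52.56

# Two hypothetical providers at 99.9% each.
pair = combined_availability(0.999, 0.999)
print(round(downtime_minutes_per_year(pair), 2))
```

Under the independence assumption, two three-nines providers together allow well under a minute of downtime a year, compared with nearly nine hours for either one alone.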