Month: September 2019

There are many considerations to account for when implementing Microsoft SQL Server in a private cloud environment. Today’s SQL-dependent applications have different performance and high availability (HA) requirements. In Part 1 of this series, we covered implementing Microsoft SQL Server in a private cloud for maximum performance. Here in Part 2, we’ll explore deployment in a private cloud with HA.

HA Options Available Within Microsoft SQL Server

When designing Microsoft SQL Server deployments for HA, we must consider the requirements and features of SQL-dependent applications to ensure they work with highly available deployments. Below are a few HA options available with Microsoft SQL Server, organized by deployment type.

Physical SQL Server Deployment with Single SAN and SQL Clustering

This HA option combines native Windows Server failover clustering with SQL Server clustering on a single SAN or direct-attached storage subsystem. In this option, we run two SQL Server nodes with a single copy of the database stored on the SAN. Only one SQL instance is active and attached to the databases at any given time.

Pros: This option protects against a single SQL node failure. The surviving node will start its SQL instance and attach to the same databases to continue serving client requests. It offers the best compute performance and good storage performance.

Cons: This option does not protect against database corruption or storage sub-system issues. Both corruption and SAN issues will impact the entire cluster. Licensing costs may be an issue if licensing by CPU.

Virtual SQL Server Deployment with SQL on SAN and VMware HA

In this option, SQL Server runs in a VM with its databases on SAN storage, and standard Windows clustering is not recommended. VMware HA, and equivalent features in other hypervisors, protects against hypervisor node failure by restarting the SQL Server VM on another node.

Pros: This is an easy way to implement HA without having to configure and support Windows Server clustering. Per-CPU SQL licensing costs are reduced. This option offers good compute performance and good storage performance.

Cons: This option does not protect against database corruption or storage sub-system issues. Both problems will cause an outage of the SQL server.

Virtual SQL Server Deployment with SQL on Local SSD Disk and SQL Native AlwaysOn HA

In this option, we use two SQL virtual machines running on local SSD drives configured as RAID10 directly on the hypervisor nodes. Each SQL VM runs on a separate node with its own local storage. The database that requires HA is protected by SQL AlwaysOn, which maintains two copies of the database on two separate VMs and two separate high-speed storage subsystems.

Pros: This option offers strong HA protection against a single SQL Server VM failure, a single hardware node failure and a single storage system failure. AlwaysOn keeps two separate copies of the database in sync and provides automatic database page corruption protection. It offers good compute performance and the best storage performance in a virtual environment. Virtualizing SQL Server saves on per-CPU licensing costs because you assign only the amount of CPU your SQL Server needs. Only the active SQL Server instance requires licensing.

Cons: VMware vMotion should not be used while the SQL VM is powered on. In this design, that is not a limitation: AlwaysOn handles failover between the two VMs, so the protected database server never needs to be moved with vMotion. By design, VMware HA and other VMware resource management services are not used in this option. Local storage has to be scoped with growth in mind. My rule of thumb is to scope three years of required growth for local storage per server.
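To make the three-year rule of thumb concrete, here is a minimal Python sketch. It assumes growth is roughly linear and measured as net daily growth in GB; the function name and the optional `headroom` fraction are hypothetical illustrations, not part of any tool.

```python
def local_storage_needed_gb(current_gb: float, daily_growth_gb: float,
                            years: int = 3, headroom: float = 0.0) -> float:
    """Project the local capacity (GB) one SQL server needs after `years` of growth.

    Assumes linear growth at a constant net daily rate. `headroom` is a
    hypothetical optional safety fraction (0.2 adds 20 percent).
    """
    if current_gb < 0 or daily_growth_gb < 0 or years < 0 or headroom < 0:
        raise ValueError("inputs must be non-negative")
    projected_gb = current_gb + daily_growth_gb * 365 * years
    return projected_gb * (1 + headroom)


# Example: 500 GB of databases today, growing about 1 GB per day.
print(local_storage_needed_gb(500, 1))                # 1595.0
print(local_storage_needed_gb(500, 1, headroom=0.2))  # same, plus 20 percent
```

Because each AlwaysOn replica holds its own full copy of the database, the same figure applies to each server's local storage.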

Physical SQL Server Deployment with Local SSD Storage and SQL Native AlwaysOn HA

This option uses two physical SQL servers, each running on its own bare metal server with SSD RAID10 local storage. The database that requires HA is protected by SQL AlwaysOn, which maintains two copies of the database on the two bare metal servers and their two separate high-speed storage subsystems.

Pros: This option provides strong HA protection against a single SQL Server failure and a single storage system failure. AlwaysOn keeps two separate copies of the database in sync and provides automatic database page corruption protection. This option offers the best compute performance and the best storage performance. Only the active SQL Server instance requires licensing.

Cons: Per-CPU licensing costs could get pricey depending on CPU core count. Local storage must be scoped with growth in mind. My rule of thumb is to scope three years of required local storage growth per server.
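One way to compare the four options is to encode the failure scenarios each one survives, as described in the pros and cons above, and filter by requirement. This Python sketch is a simplified decision aid based on our reading of the options; the class and field names are hypothetical, and it deliberately ignores performance, licensing and cost.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HAOption:
    name: str
    survives_node_failure: bool     # SQL node, VM or host failure
    survives_storage_failure: bool  # SAN / storage subsystem failure
    auto_page_repair: bool          # AlwaysOn automatic page corruption protection


OPTIONS = [
    HAOption("Physical, single SAN, SQL clustering", True, False, False),
    # VMware HA recovers by restarting the VM on another node, so expect a brief outage.
    HAOption("Virtual, SQL on SAN, VMware HA", True, False, False),
    HAOption("Virtual, local SSD, AlwaysOn", True, True, True),
    HAOption("Physical, local SSD, AlwaysOn", True, True, True),
]


def options_meeting(need_storage_protection: bool, need_page_repair: bool) -> list[str]:
    """Return the names of options that satisfy the given protection requirements."""
    return [
        o.name
        for o in OPTIONS
        if o.survives_node_failure
        and (o.survives_storage_failure or not need_storage_protection)
        and (o.auto_page_repair or not need_page_repair)
    ]


print(options_meeting(need_storage_protection=True, need_page_repair=False))
```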

Closing Thoughts on Microsoft SQL Server HA Options

Compare your workload requirements and budget with the capabilities of each option to determine what works best for you. Development and test SQL servers, as well as many production workloads, can easily run inside virtualized environments with SAN storage. Your more demanding production workloads may need to be placed in virtual or physical environments with local SSD-based storage to meet their performance and HA needs.

Integrating these options into your private cloud environments is simple and can save on costs down the line. When working with local storage, plan for disk space growth from the start; future-proofing your local storage up front will save on maintenance headaches and costs in the long run.

In this series, we have looked at basic SQL Server concepts and the performance factors to consider when designing Microsoft SQL Server deployments for HA and high performance. Download the white paper by filling out the form below to get this series in its entirety.

High performance is measured differently for different applications and depends greatly on the end user’s expectations as they interact with your supported application. By collecting these simple measurements and requirements up front, you will be able to right-size the environment for your end users and stick to your budget.

Read Part 1: How to Implement Microsoft SQL Servers in a Private Cloud for Maximum Performance

Read: The Basics of a Microsoft SQL Server Architecture

Download the full white paper below:

Explore HorizonIQ's

Managed Private Cloud

LEARN MORE

Stay Connected

Boston, Massachusetts, is one of America’s oldest cities and the largest city in New England. Beantown is known for many things, including Fenway Park, the Boston Marathon and the American Revolution. And … colocation data centers? Okay, fine: that last item might not make a Boston TripAdvisor list anytime soon, but the data center market in the nation’s tenth-largest metro area is stacked with Tier 3 facilities offering high-performance colocation solutions.

Home to some of the nation’s most prestigious universities, Boston is at the forefront of research and development in a number of growing industries. Software, biotech and health care industries also figure prominently in the area’s economy. When these institutions look to cut costs and improve operations by moving their infrastructure off-premises, what is the deciding factor in choosing colocation in Boston rather than cloud in New York or elsewhere? Cloud vs. colocation is a common debate when moving off-premises, and choosing either, or both, is a decision to be made based on your applications and IT infrastructure model.

For companies that want to stay in close proximity to their data, need a top-tier facility and demand full control and ownership of the infrastructure stack, colocation is and will remain a popular solution. Boston’s colocation options meet the needs of these local industries.

Wholesale providers competing in this area are also seeing demand from the Big Data analytics and high-performance computing markets, according to Data Center Frontier.

Are you considering Boston colocation for an off-premises move, or do you need an upgrade over your existing environment? There are several reasons why we’re confident you’ll choose INAP as your partner in this market.

INAP’s Presence in Boston and Beyond

We offer two POPs on a fiber ring through the metro area that connect to our Boston flagship. The data center provides a backbone connection to New York, Chicago and Montreal through our private fiber. INAP’s data center is positioned to support the growth in research and development in the city and region.

With INAP, you can expect more from your data center. Designed with Tier 3 compliant attributes, our Boston data center is concurrently maintainable and energy efficient, which is important in markets like Boston. We offer high-density configurations, including cages, cabinets and private suites, which are fully integrated with critical infrastructure monitoring.

Our Boston-area flagship is strategically located 10 minutes from downtown and Logan International Airport, outside of flood plains and seismic zones to help give you extra peace of mind. You’ll also find NOC and onsite engineers 24/7/365, with remote hands available. They’re dedicated to keeping your infrastructure online, secure and always operating at peak efficiency.

At a glance, our Boston Data Center features:

- Power: 6 MW of power capacity, 20+ kW per cabinet

- Space: Over 45,000 square feet of leased space with 28,000 square feet of raised floor

- Facilities: Tier 3 compliant attributes, located outside of flood plain and seismic zones

- Energy Efficient Cooling: 1,600 tons of cooling capacity, N+1 with concurrent maintainability

- Security: 24/7/365 onsite staff, video surveillance, key card and biometric authentication

- Compliance: PCI DSS, HIPAA, SOC 2 Type II and annual independent compliance audits


INAP’s Private Data Center Suites

Boston is just one of INAP’s 11 locations offering Private Data Center Suites. Our private suites offer custom-built wholesale colocation solutions in Tier 3-design facilities. We support custom deployments of 250kW and up in state-of-the-art data centers equipped with redundant UPS and cooling infrastructure, advanced security features and premium amenities.

These fully customizable colocation solutions provide access to INAP’s high-capacity network backbone and one-of-a-kind, latency-killing Performance IP® solution. Learn more about INAP’s Private Data Center Suites.

INAP Interchange for Boston Colocation

Considering Boston for colocation, but not sure where your future will take you? With INAP’s global footprint, which includes more than 600,000 square feet of leasable data center space, you’ll have access to data centers woven together by our high-performance network backbone and route optimization engine, ensuring your environment can connect everywhere, faster.

With INAP Interchange, a spend portability program available to new colocation or cloud customers, you can switch infrastructure solutions—dollar for dollar—part-way through your contract. This will help you avoid environment lock-in and achieve current IT infrastructure goals while providing the flexibility to adapt for whatever comes next.

INAP Colocation, Bare Metal and Private Cloud solutions are eligible for the Interchange program. Chat with us to learn more about these services, and how spend portability can benefit your infrastructure solution.

How to Implement Microsoft SQL Servers in a Private Cloud for Maximum Performance

There are many considerations to take into account when implementing Microsoft SQL Server in a private cloud environment. Today’s SQL-dependent applications have different performance and high availability (HA) requirements. As a solutions architect, my goal is to provide our customers with the best-performing, highly available designs while managing budgetary concerns, scalability, supportability and total cost of ownership. Like so many tasks in IT infrastructure strategy, success is all about planning. There are many moving pieces, and balancing everything to reach our goal becomes a challenge if we don’t ask the right questions up front.

In this two-part series, I’ll share my approach to scoping, sizing and designing private cloud infrastructure for migrating existing Microsoft SQL Server environments or standing up new ones. In part one, I’ll identify performance considerations and provide real-world examples to make sure your SQL Server environment is ready to meet your organization’s application, growth and DR requirements. In part two, I’ll focus on SQL Server deployment options with high availability.

If you need a review of SQL Server basics before we dive into private cloud design, you can brush up with The Basics of a Microsoft SQL Server Architecture.

Here’s what we’ll cover in this post:

- SQL Server performance considerations

- Scoping and sizing for deployments in private cloud

- SQL Server disk layout for performance, HA and DR

SQL Server Performance Considerations: RAM, IOPS, CPU and More

What follows are the basic performance considerations to take into account when designing a Microsoft SQL Server environment.

RAM—These requirements are based on database size and developer recommendations. Ideally, you’ll have enough RAM to put the entire database into RAM. However, that’s not always possible with large DB sizes. RAM is delicious to SQL and the server will eat it up, so be generous if your budget allows. Leave 20 percent of RAM reserved on the server for OS and other services.
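The 20 percent reservation translates directly into how much memory to give SQL Server, a cap usually applied through SQL Server's `max server memory (MB)` configuration option. The hypothetical Python helper below only does the arithmetic, assuming the 20 percent rule above and 1 GB = 1,024 MB.

```python
def sql_max_server_memory_mb(total_ram_gb: float, os_reserve: float = 0.20) -> int:
    """Memory (MB) left for SQL Server after reserving a share for the OS.

    Follows the rule of thumb of keeping 20 percent of RAM for the OS and
    other services; the result is rounded down to whole megabytes.
    """
    if not 0 <= os_reserve < 1:
        raise ValueError("os_reserve must be in [0, 1)")
    total_mb = total_ram_gb * 1024
    return int(total_mb * (1 - os_reserve))


# Example: a server or VM with 128 GB of RAM.
print(sql_max_server_memory_mb(128))  # 104857
```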

IOPS—The SQL Server is mostly a read/write machine, and its performance is dependent on disk IO and storage latency. With SSDs becoming more affordable, many SQL DBAs now prefer to run on SSDs due to high IOPS provided by SSD and NVMe drives. In the past, many 15K SAS disks in RAID10 were the norm for data volumes.
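Because RAID10 mirrors every write, each front-end write costs two back-end disk writes, while a read costs one. The Python sketch below applies that write penalty to estimate the front-end IOPS an array can sustain for a given read/write mix. The per-drive IOPS figure is an input you must supply from drive specifications or your own testing; the number in the example is a hypothetical placeholder, not a measurement.

```python
RAID10_WRITE_PENALTY = 2  # each write lands on both disks of a mirrored pair


def raid10_frontend_iops(drives: int, iops_per_drive: float, read_fraction: float) -> float:
    """Estimate sustainable front-end IOPS for a RAID10 array.

    Back-end capacity is drives * iops_per_drive; each front-end read costs one
    back-end IO and each front-end write costs RAID10_WRITE_PENALTY back-end IOs.
    """
    if drives < 4 or drives % 2:
        raise ValueError("RAID10 needs an even number of drives, at least 4")
    if not 0 <= read_fraction <= 1:
        raise ValueError("read_fraction must be between 0 and 1")
    backend_iops = drives * iops_per_drive
    write_fraction = 1 - read_fraction
    return backend_iops / (read_fraction + RAID10_WRITE_PENALTY * write_fraction)


def raid10_usable_capacity(drives: int, drive_size_tb: float) -> float:
    """Usable capacity is half of raw capacity because every drive is mirrored."""
    return drives // 2 * drive_size_tb


# Hypothetical example: eight drives at an assumed 180 IOPS each, 70 percent reads.
print(round(raid10_frontend_iops(8, 180, 0.7)))  # 1108
```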

Low latency is essential to SQL performance. By today’s standards, keeping latency below 5ms per IO is the norm. Sub 1ms latency is very common with local SSD storage. However, 10ms is still a good response time for most medium performance applications.
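The latency figures above can be turned into a simple check to run against measured values. This Python sketch hard-codes the thresholds from this paragraph (sub-1ms, below 5ms, 10ms); the bucket labels and the treatment of anything above 10ms are our own illustrative choices.

```python
def classify_io_latency(latency_ms: float) -> str:
    """Bucket an average per-IO latency using the thresholds discussed above."""
    if latency_ms < 0:
        raise ValueError("latency cannot be negative")
    if latency_ms < 1:
        return "sub-1ms: typical of local SSD storage"
    if latency_ms < 5:
        return "under 5ms: today's norm"
    if latency_ms <= 10:
        return "up to 10ms: good for most medium-performance applications"
    return "over 10ms: beyond the ranges above; worth investigating"


for sample_ms in (0.4, 3.2, 8.0, 14.0):
    print(f"{sample_ms:>5} ms -> {classify_io_latency(sample_ms)}")
```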

CPU—Bare metal CPU allocation is easy. Your server has a number of CPUs, and all of them can be allocated to SQL with no negative consequences other than licensing costs. However, allocating CPU to a SQL Server in a VMware private cloud environment should be done with caution. Licensing is based on CPU cores, and assigning more vCPUs than a VM actually uses can negatively impact performance. Rightsizing is key.
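Because licensing follows cores, the difference between bare metal and a rightsized VM shows up in simple arithmetic. The Python sketch below assumes per-core licensing with a minimum of four core licenses per physical processor and per VM, which matches Microsoft's per-core model as we understand it; terms vary by version and agreement, so verify against the current SQL Server licensing guide. It does not price anything.

```python
def licensed_cores_bare_metal(sockets: int, cores_per_socket: int,
                              min_per_socket: int = 4) -> int:
    """Bare metal: every physical core is licensed (assumed 4-core minimum per socket)."""
    return sockets * max(cores_per_socket, min_per_socket)


def licensed_cores_vm(vcpus: int, min_per_vm: int = 4) -> int:
    """Virtual: only the vCPUs assigned to the VM are licensed (assumed 4-core minimum)."""
    return max(vcpus, min_per_vm)


# Example: a 2-socket, 16-core-per-socket host vs. a VM rightsized to 8 vCPUs.
print(licensed_cores_bare_metal(2, 16))  # 32
print(licensed_cores_vm(8))              # 8
```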

SQL Server is not normally a CPU hog. Unexpected and prolonged high CPU usage during production hours means something is not right with the database or SQL code, and adding CPU in those cases may not solve the issue. These conditions should be checked by SQL DBAs and developers. Higher-than-normal CPU utilization is to be expected during maintenance windows. Where a database seems to require high CPU utilization as the norm, a developer or SQL DBA should check it for missing indexes or other issues before more vCPUs are added to virtual servers.

SQL as a VM

Based on the above considerations, budgetary and licensing constraints may lean your SQL Server design toward SQL as a VM on a private cloud with a restricted number of assigned vCPUs. Running SQL on a private cloud is a great way to save on licensing costs. It’s also a good performer for most application loads, but because SQL is largely an IO-dependent system, its performance will depend greatly on the latency and IO availability of your storage system.

Storage Systems IO Availability

SAN storage will normally provide large amounts of IO, depending on the number and type of disks in the SAN. The norm to expect from many SAN systems is 5ms to 15ms of latency per IO. When your application requires sub-1ms latency, as gaming, financial and ad-tech systems often do, a local SSD RAID10 disk array will save the day. These local disk arrays are easy to set up and use with both VM and bare metal server implementations. You may ask, “What about the HA and extra redundancy features of using a SAN instead of local disk?” I discuss high availability in a performance-demanding environment in Part 2: Deploying Microsoft SQL Servers in a Private Cloud with High Availability.

In the end, your application’s performance requirements and budget will drive your decision whether to run a private cloud or a bare metal SQL deployment. Both VM and bare metal deployments can be configured with high availability and will easily integrate into your private cloud environments.

With these performance considerations in mind, let’s discuss the other scoping and sizing metrics we’ll need to design a high-performing, future-proofed SQL deployment.

Scoping and Sizing for Microsoft SQL Deployments in a Private Cloud

As a Solution Engineer at INAP, I collect numerous measurements during the SQL scoping process. Before delving into numbers, I start by evaluating pain points clients may have with their current SQL Servers. I want to know what hurts.

Asking these and other questions helps me understand how to resolve issues and future proof your next SQL deployment:

- Is it performing to your expectation?

- Are you meeting your SQL database maintenance time window?

- Do your users complain that their SQL dependent application freezes sometimes for no reason?

- When was the last time you restored a database from your backup and how long did that take?

- What’s your failover plan in case your production database server dies?

- What backup software is being used for SQL?

- Are your databases running in the simple or full recovery model?

Once I know the exact problem, or if I’m designing for a new application, I start by collecting specific technical details. The most common specific measurements and requirements collected during the scoping phase are:

- DB sizes for all databases per SQL instance

- Instances (Is there more than one SQL instance, and why?)

- Growth requirements (Usually measured in daily change rate to help with other considerations like backups and replication for DR purposes.)

- RAM requirements & CPU requirements

- Performance requirements, such as latency/IO and IOPS requirements

- HA requirements (Can your customer base wait for you to restore your SQL Server in case of an outage? How long does it take to restore?)

- Regulatory/Compliance requirements such as HIPAA and PCI

- Maintenance schedule (Do the maintenance jobs complete on time every day?)

- Replication requirements

- Reporting requirements (Does this deployment need a reporting server so as to not interrupt production workloads?)

- Backup (What is used today to back up production data? Has it been tested? What are the challenges?)
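The daily change rate collected above feeds directly into DR replication sizing. As a rough sketch, assuming changes are spread evenly across the replication window and ignoring compression and protocol overhead, the average bandwidth needed is the daily change divided by the window. The Python helper below is hypothetical and uses decimal units (1 GB = 8,000 megabits).

```python
SECONDS_PER_HOUR = 3600
MEGABITS_PER_GB = 8 * 1000  # decimal gigabytes to megabits


def replication_mbps(daily_change_gb: float, window_hours: float = 24) -> float:
    """Average bandwidth (Mbps) needed to ship one day's changes within the window."""
    if window_hours <= 0:
        raise ValueError("window_hours must be positive")
    return daily_change_gb * MEGABITS_PER_GB / (window_hours * SECONDS_PER_HOUR)


# Example: 50 GB of daily change, replicated continuously vs. in an 8-hour window.
print(f"{replication_mbps(50):.1f} Mbps")     # 4.6 Mbps
print(f"{replication_mbps(50, 8):.1f} Mbps")  # 13.9 Mbps
```

Real change is bursty, so peak bandwidth will be higher than this average; treat the result as a floor.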

In environments requiring high-performance database response times, we use native Windows Server tools, such as perfmon, to collect very detailed performance metrics from existing SQL Servers. These metrics identify performance bottlenecks and other considerations that help us resolve issues in current or future database deployments. Because SQL Server performance is heavily dependent on the disk subsystem, we will go deeper into disk design recommendations for private clouds, VMs and bare metal deployments.
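As an example of working with perfmon data, the Python sketch below averages the LogicalDisk `Avg. Disk sec/Read` and `Avg. Disk sec/Write` counters from a perfmon log exported to CSV (for example with `relog`). Those counters report seconds per IO, so the sketch multiplies by 1,000 to get milliseconds. The sample data, server name and drive letters are hypothetical, and real exports may need extra cleanup.

```python
import csv
import io
from statistics import mean

LATENCY_COUNTERS = ("Avg. Disk sec/Read", "Avg. Disk sec/Write")


def average_latency_ms(perfmon_csv: str) -> dict[str, float]:
    """Average each disk-latency counter column in a perfmon CSV, in milliseconds."""
    rows = list(csv.reader(io.StringIO(perfmon_csv)))
    header, data = rows[0], rows[1:]
    averages = {}
    for col, counter in enumerate(header):
        if not counter.endswith(LATENCY_COUNTERS):
            continue
        # Perfmon leaves blanks (" ") for missed samples; skip them.
        samples = [float(row[col]) for row in data if col < len(row) and row[col].strip()]
        if samples:
            averages[counter] = mean(samples) * 1000  # seconds per IO -> ms
    return averages


# Hypothetical export: one data volume (E:) and one TempDB volume (T:).
SAMPLE = r'''"(PDH-CSV 4.0) (Eastern Daylight Time)(240)","\\SQL01\LogicalDisk(E:)\Avg. Disk sec/Read","\\SQL01\LogicalDisk(T:)\Avg. Disk sec/Write"
"09/01/2019 10:00:00.000","0.004","0.012"
"09/01/2019 10:00:15.000","0.006"," "
'''

for counter, ms in average_latency_ms(SAMPLE).items():
    print(f"{counter}: {ms:.1f} ms")
```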

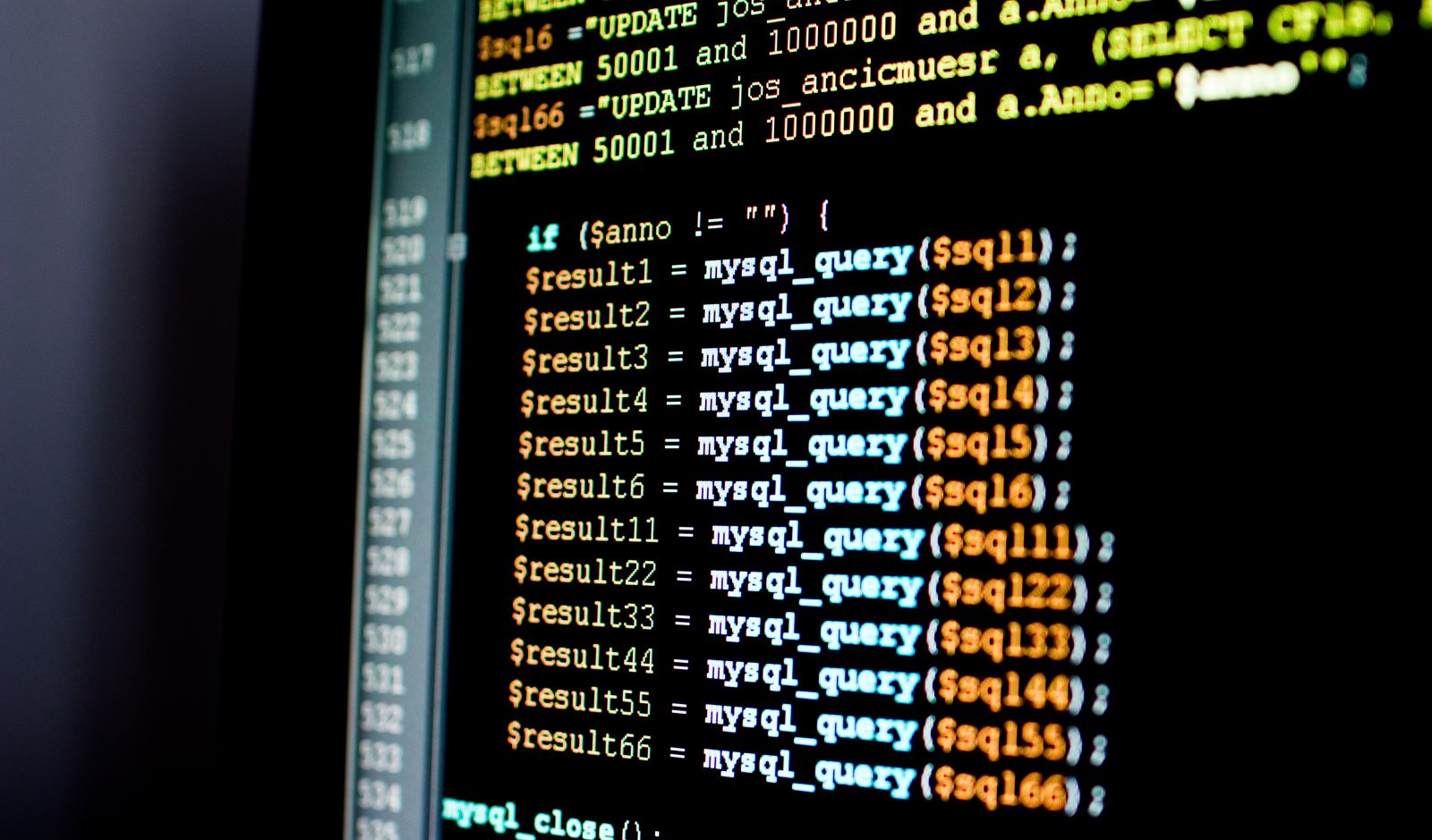

SQL Server Disk Layout for Performance, HA and DR

Separating database files onto different disks is a best practice. It helps performance and makes it easier for your DBA to identify performance issues when troubleshooting. For example, a runaway query could be beating up your TempDB. If TempDB is on a separate disk from your production database files, you can easily see that the TempDB disk is being thrashed, and that TempDB issue won’t step on other workloads in your environment.

For high performance requirements, SSDs are recommended. The following is a basic disk layout for performance:

- Data (MDF, NDF files): Fast read/write disk, many drives in an array preferred for best performance

- Index (NDF files, not often used): Fast read/write disk, many drives in an array preferred for best performance

- Log (LDF files): Fast write performance, not much reading happens here

- TempDB (Temp database to crunch numbers and formulas): Should be a fast disk. SSD is preferred. Do not combine with other data or log files.

- Page File (pagefile.sys): Keep this on a separate disk LUN or array if possible. The C: drive is not a good place for a page file on a SQL Server.
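The TempDB and page file guidance above lends itself to an automated sanity check. This Python sketch assumes a hypothetical mapping of file roles to drive letters and flags TempDB or the page file sharing a volume with anything else, plus a page file on C:. It illustrates the layout rules and is not a feature of SQL Server or Windows.

```python
from collections import defaultdict

ISOLATED_ROLES = {"tempdb", "pagefile"}  # roles that should get their own volume


def layout_problems(layout: dict[str, str]) -> list[str]:
    """Return human-readable warnings for a {role: volume} disk layout."""
    roles_by_volume = defaultdict(list)
    for role, volume in layout.items():
        roles_by_volume[volume.upper()].append(role)

    problems = []
    for volume, roles in sorted(roles_by_volume.items()):
        for role in sorted(ISOLATED_ROLES.intersection(roles)):
            others = [r for r in sorted(roles) if r != role]
            if others:
                problems.append(f"{role} shares {volume} with {', '.join(others)}")
    if layout.get("pagefile", "").upper() == "C:":
        problems.append("pagefile is on C:")
    return problems


good = {"os": "C:", "data": "E:", "index": "F:", "log": "L:", "tempdb": "T:", "pagefile": "P:"}
bad = {"os": "C:", "data": "E:", "log": "E:", "tempdb": "E:", "pagefile": "C:"}
print(layout_problems(good))  # []
print(layout_problems(bad))
```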

In a SQL deployment that requires DR, the above disk layout allows you to granularly replicate only the SQL data and OS volumes that need to be replicated, leaving TempDB and the page file out of the replication design since they are reset on reboot. TempDB and the page file also produce lots of noisy IO, so replicating them would result in unnecessarily heavy bandwidth and disk utilization.
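Continuing the same idea, a replication plan can be derived from a disk layout by skipping volumes that hold only TempDB or the page file. In this hypothetical Python sketch, a volume that mixes replicated and non-replicated files must still be replicated in full, which is exactly the noisy-IO problem that separate volumes avoid.

```python
NON_REPLICATED_ROLES = {"tempdb", "pagefile"}  # reset on restart, noisy IO


def volumes_to_replicate(layout: dict[str, str]) -> list[str]:
    """Volumes holding anything other than TempDB or the page file."""
    return sorted({volume.upper() for role, volume in layout.items()
                   if role not in NON_REPLICATED_ROLES})


separated = {"os": "C:", "data": "E:", "index": "F:", "log": "L:", "tempdb": "T:", "pagefile": "P:"}
mixed = {"os": "C:", "data": "E:", "log": "E:", "tempdb": "E:", "pagefile": "C:"}
print(volumes_to_replicate(separated))  # ['C:', 'E:', 'F:', 'L:']
print(volumes_to_replicate(mixed))      # ['C:', 'E:'] (TempDB and page file ride along)
```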

Read Part 2: Deploying Microsoft SQL Servers in a Private Cloud with High Availability
