Month: August 2019

Atlanta, Georgia, is the Southeast’s leading economic hub. It may be only the ninth-largest metro area in the U.S., but it ranks in the top five markets for bandwidth access and in the top three for Fortune 500 headquarters, and it is home to the world’s busiest airport[i]. It should be no surprise that Atlanta is also a popular and growing destination for data centers.

Atlanta offers plenty to attract businesses looking for data center space, including low power costs, low risk of natural disasters and a pro-business climate. Its major industries include technology, mobility and IoT, bioscience, supply chain and manufacturing.

Low power costs and high connectivity are strong drivers of data center growth in Atlanta. On average, power costs under five cents per kilowatt-hour (kWh), which is even more favorable than the 5.2 cents per kWh in Northern Virginia, the U.S.’s No. 1 data center market. According to Georgia Transmission Corp., Atlanta is on an Integrated Transmission System (ITS) for power, meaning all providers have access to the same grid, which keeps transmission efficient and reliable.
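To put the per-kWh comparison in concrete terms, here is a rough sketch of the annual energy bill for a constant IT load. The 1 MW load and the flat 5.0-cent Atlanta rate are illustrative assumptions (the figure above is only "under five cents"), and the sketch ignores demand charges, cooling overhead and taxes.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours; ignores leap years


def annual_energy_cost(load_kw: float, rate_usd_per_kwh: float) -> float:
    """Annual cost in USD of running a constant load at a flat per-kWh rate."""
    return load_kw * HOURS_PER_YEAR * rate_usd_per_kwh


# Illustrative assumption: a constant 1 MW (1,000 kW) load.
load_kw = 1_000
atlanta = annual_energy_cost(load_kw, 0.050)  # assumed upper bound for "under five cents"
nova = annual_energy_cost(load_kw, 0.052)     # Northern Virginia rate cited above
print(f"Atlanta: ${atlanta:,.0f}  N. Virginia: ${nova:,.0f}  difference: ${nova - atlanta:,.0f}")
```

Under these assumptions, the 0.2-cent gap works out to about $17,520 per year for each megawatt of constant load, and the savings grow if the actual Atlanta rate is further below five cents.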

As noted in the Newmark Knight Frank report 2Q18 – Atlanta Data Center Market Trends, the City of Atlanta is investing in becoming a sustainable smart city. Plans include a Smart City Command Center at Georgia Tech’s High Performance Computing Center and a partnership with Google Fiber.

Economic incentives also aid the growth of Atlanta’s data center market. According to Bisnow, Georgia legislators passed House Bill 696, giving “data center operators a sales and use tax break on the expensive equipment installed in their facilities.” This incentive extends to 2028, and providers have taken notice: 451 Research noted that if all the providers who have declared plans to build follow through, Atlanta will overtake Dallas for the No. 3 spot in data center growth.

Considering Atlanta for a colocation, network or cloud solution? There are several reasons why we’re confident you’ll call INAP your future partner in this growing market.

The INAP Atlanta Data Center Difference

With a choice of providers in this thriving metro, why choose INAP?

Our downtown flagship Atlanta data center offers a reliable, high-performing backbone connection to Washington, D.C. and Dallas through our private fiber. INAP customers in this Atlanta data center avoid single points of failure with high-capacity metro network rings. Metro Connect provides multiple points of egress for traffic and is Performance IP® enabled. The metro rings are built on dark fiber and use state-of-the-art networking gear from Ciena.

The flagship Atlanta data center offers cages, cabinets and private suites, all housed in facilities designed with Tier 3-compliant attributes. For customers managing large-scale deployments, Atlanta is a great fit: our private colocation suites give them the flexibility they need. And for customers looking to reduce their footprint, our high-density Atlanta data centers let them fit more gear into a smaller space.

To find a best-fit configuration within the Atlanta data center market, customers can work with INAP’s expert support technicians to adopt the optimal architecture specific to the needs of their applications. Engineers are onsite in the Atlanta data center and are dedicated to keeping your infrastructure online, secure and always operating at peak efficiency.

INAP’s extensive product portfolio supports businesses and allows customers to customize their infrastructure environments.

At a glance, our Atlanta data center features:

- Power: 10 MW of power capacity, 20+ kW per rack

- Space: Over 120,000 square feet of Atlanta data center leased space with 45,000 square feet of raised floor

- Facilities: Tier 3 compliant attributes, located outside of flood plain and seismic zones

- Security: 24/7/365 onsite staff, video surveillance, key card and biometric authentication

- Network: INAP Performance IP® mix, carrier-neutral connectivity, geographic redundancy

- Compliance: PCI DSS, HIPAA, SOC 2 Type II
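As a rough capacity check on the power figures above, dividing total power by per-rack density gives an upper bound on how many racks could run at that density. This minimal sketch assumes the full 10 MW is available to IT load, which is an idealization; in practice some capacity goes to cooling, redundancy and headroom.

```python
def max_racks_at_density(total_power_mw: float, kw_per_rack: float) -> int:
    """Upper bound on whole racks that can run at the given per-rack density."""
    total_kw = total_power_mw * 1_000  # 1 MW = 1,000 kW
    return int(total_kw // kw_per_rack)


print(max_racks_at_density(10, 20))  # 500
print(max_racks_at_density(10, 30))  # 333
```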

Download the Atlanta Data Center spec sheet here [PDF].

INAP’s Content Delivery Network Performs with 18 Edge Locations

Atlanta is one of INAP’s 18 high-capacity CDN edge locations. Our Content Delivery Network (CDN) substantially improves the online experience of our customers’ end users, regardless of their distance from the content’s origin server. More than 90 global POPs also support this network.

Content is delivered along the lowest-latency path (from the origin server, to the edge, to the user) using INAP’s proven and patented route-optimization technology. We also use GeoDNS technology, which identifies a user’s latitude and longitude and directs requests to the nearest INAP CDN cache for seamless delivery.
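The "nearest cache" step can be illustrated with a great-circle distance lookup. This is a simplified sketch, not INAP's GeoDNS implementation: the three-entry edge list and its approximate city-center coordinates are hypothetical, and a production resolver typically estimates location from the client or resolver IP and weighs health and load as well as distance.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in kilometers between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


# Hypothetical edge list for illustration (approximate city-center coordinates).
EDGES = {
    "atlanta": (33.749, -84.388),
    "dallas": (32.777, -96.797),
    "washington-dc": (38.907, -77.037),
}


def nearest_edge(user_lat: float, user_lon: float, edges: dict = EDGES) -> str:
    """Return the name of the edge location closest to the user."""
    return min(edges, key=lambda name: haversine_km(user_lat, user_lon, *edges[name]))


print(nearest_edge(35.227, -80.843))  # user near Charlotte, NC -> atlanta
```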

INAP’s CDN also gives customers control of all aspects of content caching at their CDN edges—automated purges, cache warming, cache bypass, connection limits, request disabling, URL token stripping and much more.

Learn more about INAP’s CDN here.

Our Atlanta data centers are conveniently located in the Atlanta metro area:

- Flagship Downtown Atlanta Data Center: 250 Williams Street NW, Atlanta, GA 30303

- Atlanta POP: 1033 Jefferson Street NW, Atlanta, GA 30318

- Atlanta POP: 56 Marietta Street NW, Atlanta, GA 30303

More About Our Flagship Atlanta Data Center

Our flagship Atlanta data center is located at 250 Williams Street NW, Atlanta, GA 30303. This Atlanta colocation data center connects to our Dallas and Washington, D.C. data centers via our reliable, high-performing backbone. Our carrier-neutral, SOC 2 Type II Atlanta facilities are concurrently maintainable and energy efficient, and they support high-power-density environments of 20+ kW per rack. If you need colocation services or other data center solutions in the Atlanta metro area, contact us.

[i] Newmark Knight Frank, 2Q18 – Atlanta Data Center Market Trends

Explore HorizonIQ

Bare Metal

LEARN MORE

Stay Connected

Save with INAP Phoenix Data Centers and Arizona Tax Exemptions

With its low risk of natural disasters, low energy costs and business-friendly policies, Phoenix, Arizona, has emerged as one of the fastest-growing data center markets in the U.S.

The boom is fueled in part by Arizona’s aggressive tax incentives under its Computer Data Center Program, which encourages data center operation and expansion in the state. Administered by the Arizona Commerce Authority and the Arizona Department of Revenue, the program offers Transaction Privilege Tax (TPT) and Use Tax exemptions at the state, county and local levels on purchases of qualified computer data center equipment delivered to and installed at a qualified facility.

For large-scale new deployments, this equates to millions in potential savings.

INAP is pleased to share that we’ve qualified for the 20-year tax exemption, meaning that new Arizona colocation customers can take advantage of these benefits at our flagship Phoenix data center.

Secure, stable, affordable and highly connected, INAP’s Phoenix data centers are the perfect home for a disaster recovery environment. Our flagship Phoenix data center is located at 2500 W Frye Road, Chandler, Arizona 85224, and we offer an array of other data centers throughout the Phoenix metro area. To learn more about our data centers in Arizona, visit our Phoenix data centers page.

Chat Now or download our Phoenix data center Info Brief to learn more about how you can benefit.


How One INAP Customer Is Disrupting Desktop as a Service Solutions for the SMB Market

Remote work demands efficient and always-on desktop as a service (DaaS) solutions that allow employees to work and collaborate at any time, from anywhere, on any device.

Because an increasing number of businesses are going completely or mostly remote and many others have a mix of full-time remote and in-office workers, providing a seamless and secure experience for employees across the board is no easy feat for IT teams.

Because DaaS solutions come from a third-party provider, companies do not control the backend infrastructure and must rely on the provider (ideally one with a robust SLA) for reliable and scalable service. Furthermore, traditional DaaS solutions take weeks to set up, with hours of planning and configuration before the client can put them to use.

To both meet growing demand and address the shortcomings of current remote desktop products, Denis Zhirovetskiy, president and founder of Adeptcore, a managed IT service provider (MSP) and INAP customer, created his own remote DaaS solution packaged for MSPs. In its first year, the product took off, boasting strong adoption and organic growth.

We sat down with Zhirovetskiy to learn more about Adeptcore, the Adeptcloud service and how the partnership with INAP has helped the company grow and scale from the very beginning.

Addressing SMB Remote Worker Needs

Adeptcore was founded to help small- and medium-sized businesses (SMBs) use their current technology and make it better, which sets it apart from companies that sell pre-packaged, per-user or per-device plans.

“We heavily focus on onboarding clients. We can spend three months onboarding one client,” Zhirovetskiy said. “We invest that time because we want to know what each of our clients does for a living and how they’re generating revenue. From there, we tailor the technology to support that mission.”

In line with this purpose, Adeptcore launched Adeptcloud in June of 2018, after a peer in the MSP community requested to use Zhirovetskiy’s proprietary DaaS solution for a client. The MSP was surprised that Adeptcore was not using industry leaders in the market for DaaS solutions and wanted to see why they created their own. Zhirovetskiy explained that his key selling point was that his solution ran on Nimble SAN with SSD cache, ensuring greater performance and reliability. Other providers typically used spinning disks.

Zhirovetskiy realized the product would work for MSPs at large. To scale it, he worked with Ray Orsini, owner of OITVOIP and one of the first Adeptcloud partners. This collaboration ensured that Adeptcloud worked with VoIP softphone technology. While working with Orsini, Zhirovetskiy discovered that Orsini was also an INAP customer, which he noted helped him certify that their products would work together.

“I started talking with other managed IT providers on an internet forum, and when they saw our solution, they immediately wanted to do something similar for their clients,” he said. “That’s honestly all the marketing I’ve ever done for it.”

From there, the product took off. During the first 12 months of business, from June 2018 to June 2019, Adeptcloud grew to have 65 partners across the U.S. and three internationally. Adeptcloud has users log on daily from Peru and New Zealand, and has clients working from India, Dubai and China.

Why Adeptcloud Stands Out

What exactly helped Adeptcloud take off so quickly? What sets it apart from other DaaS products?

Saving Time

First, Adeptcloud saves MSPs time. “We offer a ready-to-go solution in a box. All they have to do is fill out a form, and within three days their customers are able to log in and begin working. They don’t have to worry about it,” Zhirovetskiy said.

The Adeptcloud solution has been shown to reduce MSP ticket volumes by as much as 40 percent once the solution is deployed and user training is complete. Zhirovetskiy notes that his team generally goes two to three months without tickets from clients after the first month of implementation.

Ensuring Unified Threat Management

Proactive mitigation of ever-evolving security threats is another benefit that sets Adeptcloud apart. Adeptcore has partnered with a number of top security technology companies to develop an environment where customers can store sensitive client data without worrying about it being lost or encrypted in a ransomware attack.

Zhirovetskiy also recently added top-of-the-line firewalls and threat management services to his INAP solution, noting that this makes Adeptcore the only provider he knows of that offers fully unified threat management functionality to clients.

“We work with holistic, two-factor authentication security solutions and deploy those solutions for our partners. They don’t have to do any of it. They just tell us what they want and we build it out and release it to them,” Zhirovetskiy said.

Focusing on End User and Partner Experience

Ultimately, it is Adeptcore’s focus on the end user that makes Adeptcloud work as a successful cloud solution.

“Most companies that get into the business offering a cloud service are focusing strictly on the tech itself,” Zhirovetskiy said. “They don’t focus on the end-user support, they don’t focus on the client experience and they don’t focus on supporting their partners. They focus on selling cloud.”

Partners can get the support they need with Adeptcloud. “When an MSP partner calls our support desk, they talk to someone who is on the same technical level. This is huge for IT companies: they spend less time dealing with the bureaucracy created by huge organizations.”

Scaling Adeptcloud with INAP

Zhirovetskiy has been working with INAP since the founding of Adeptcore, when the company started with one client and one server. Adeptcore wanted a data center company located in Chicago, and chose INAP for its security and service reliability.

“It’s hard to find a global company like INAP that feels like a local company,” he said. “I have a dedicated team and somebody to call who will take care of my needs. That’s the biggest reason I would recommend anybody work with INAP.”

Throughout the relationship with INAP, Zhirovetskiy has worked with his account manager, Steven Anderson, and INAP engineers to scale the Adeptcloud solution. He says that he talks to Anderson on a weekly basis to discuss the future of his solution: “As our platform has evolved, we always know we have experts available to assist us with our growth.”

Adeptcore uses INAP engineers for full-spectrum infrastructure solutions, from developing backup services using Veeam to designing Adeptcore’s networking infrastructure. The relationship continues to evolve as Adeptcore grows its cloud footprint and expands to other INAP data centers beyond Chicago.

“INAP support has been instrumental in helping us achieve our goals. From managing downtime to planning our next big thing,” Zhirovetskiy said. “We rely on INAP to provide us infrastructure expertise, while we provide expertise to our clients on what we’re good at—delivering them their desktops every single day.”


How to Keep Your IT Infrastructure Safe from Natural Disasters

Costly natural disasters (those causing more than $1 billion in losses) are occurring with increasing frequency. According to the National Oceanic and Atmospheric Administration (NOAA), there was an average of 6.3 billion-dollar events per year from 1980 to 2018, yet over the last five years alone, that annual average doubled to 12.6.

Last year, natural disasters cost the U.S. $91 billion, and there were 30 events in total over 2017 and 2018 with losses exceeding $1 billion.

Whether the event is a hurricane, flood, tornado or wildfire, it can blindside businesses, and many are woefully unprepared. As many as 50 percent of affected organizations won’t survive these kinds of events, according to IDC’s State of IT Resilience white paper.

Of those businesses that do survive, IDC found that the average cost of downtime is $250,000 per hour across all industries and organizational sizes.
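To see how quickly that average adds up, here is a minimal sketch that multiplies outage length by an hourly downtime cost. The $250,000 default is the IDC cross-industry average cited above; the outage durations are hypothetical, and your own hourly cost may be far higher or lower.

```python
IDC_AVG_COST_PER_HOUR = 250_000  # USD per hour, the cross-industry average cited above


def downtime_cost(hours_down: float, cost_per_hour: float = IDC_AVG_COST_PER_HOUR) -> float:
    """Estimated cost in USD of an outage lasting `hours_down` hours."""
    return hours_down * cost_per_hour


# Hypothetical outage durations: a few hours, one day, one week.
for hours in (4, 24, 24 * 7):
    print(f"{hours:>4} h down: ${downtime_cost(hours):,.0f}")
```

By this estimate, a week-long outage at the average rate would cost $42 million.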

Imagine what would happen if your business took a direct hit and your data, applications and infrastructure were disabled. We all know these events are unpredictable, but that doesn’t mean we can’t do something now to prepare for any eventuality.

Here are a few basic steps you should take to protect your IT infrastructure and keep your business up and running after a natural disaster.

Perform a Self-Evaluation

The first step in protecting your sensitive information is to determine exactly what needs to be safeguarded.

For most companies, the biggest risk is data loss. Determine how many copies of your data exist and where they are located. If your company only backs up onsite, or stores data off-site with no additional backup, you need to reevaluate your strategy. Putting all your eggs in one basket makes it easy for a natural disaster to wipe out your information.

Think About Off-Site Backups in Different Locations

If you do use off-site backups for your information, you’re taking a step in the right direction, but depending on their physical location, your data might not yet be fully protected.

Consider this scenario: Your business is headquartered in San Francisco and you back up your data in nearby Silicon Valley. A massive earthquake strikes the Bay Area (seismologists say California is overdue for the next “big one”), disabling your building as well as the data center where your backup data is located. Depending on the size of the disaster it could take hours, days or even weeks before your data is accessible. Would your company be able to survive this disruption?

A smarter option would be to select a backup site that’s not in the same geographic region, reducing the chances that both locations would be impacted by the same disaster.
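The self-evaluation and geographic-separation advice can be turned into a simple automated check over an inventory of data copies. This is a minimal sketch: the `Site` type and the free-form `region` labels (standing in for a metro area or hazard zone) are hypothetical, and a real assessment would consider specific hazards and distances rather than a single label.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Site:
    name: str
    region: str  # hypothetical hazard-region label, e.g. a metro area or seismic zone


def has_geo_separated_copy(primary: Site, backups: list) -> bool:
    """True if at least one backup copy lives outside the primary site's region."""
    return any(backup.region != primary.region for backup in backups)


hq = Site("San Francisco HQ", "bay-area")
nearby_only = [Site("Silicon Valley DC", "bay-area")]
with_remote = nearby_only + [Site("Phoenix DC", "phoenix")]

print(has_geo_separated_copy(hq, nearby_only))  # False: one quake could hit both
print(has_geo_separated_copy(hq, with_remote))  # True
```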

Consider the Cloud

An option becoming more popular with businesses is using cloud storage as their backup solution. HorizonIQ offers cost-effective, scalable cloud storage that is both flexible and dependable.

Another dependable and more robust option, Disaster Recovery as a Service (DRaaS) replicates mission-critical data and applications so your business does not suffer any downtime during natural disasters. DRaaS provides an automatic failover to a secondary site should your main environment go down, while allowing your IT teams to monitor and control your replicated infrastructure without your end users knowing anything is wrong.

Think of DRaaS as facility redundancy for your infrastructure: rather than running your servers simultaneously from multiple sites, one site stands by, ready to go in case of an emergency.
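The standby behavior described above can be sketched as a health-check loop that switches traffic to the secondary site only after several consecutive failures, so a single dropped check does not trigger a failover. This is a toy illustration under stated assumptions: the `FailoverController` class and its threshold of three checks are hypothetical, and real DRaaS products also manage replication lag, DNS or IP cutover and failback.

```python
class FailoverController:
    """Toy active/standby controller: fail over after N consecutive failed checks."""

    def __init__(self, threshold: int = 3):
        self.threshold = threshold  # hypothetical number of failures tolerated
        self.consecutive_failures = 0
        self.active = "primary"

    def record_check(self, primary_ok: bool) -> str:
        """Record one health-check result for the primary and return the active site."""
        if self.active == "standby":
            return self.active  # failing back is treated as a manual decision here
        if primary_ok:
            self.consecutive_failures = 0  # a healthy check resets the counter
        else:
            self.consecutive_failures += 1
            if self.consecutive_failures >= self.threshold:
                self.active = "standby"
        return self.active


ctl = FailoverController(threshold=3)
for ok in [True, False, True, False, False, False]:
    site = ctl.record_check(ok)
print(site)  # standby
```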

Don’t Wait Until It’s Too Late

It’s never a bad time to evaluate your disaster recovery strategy. But if you’re waiting for a natural disaster to come barreling toward your city, then you’re waiting too long to establish and activate your backup strategy.

It’s up to you and your IT team to determine which services best fit your business needs.


Microsoft SQL Server is one of the market leaders in database technology. It’s a relational database management system that supports a number of applications, including business intelligence, transaction processing and analytics. Microsoft SQL Server is built on SQL (Structured Query Language), the language used to manage relational databases and query their data.

SQL Server organizes data into tables made up of rows, which lets it relate data across tables while maintaining the data’s security and consistency. Checks built into the relational model ensure that database transactions are processed consistently.

Microsoft SQL Server also allows for simple installation and automatic updates, customization to meet your business needs and simple maintenance of your database. Below, you can get a quick overview of how a SQL Server manages data, and how data is retrieved and modified.

SQL Server Data Management

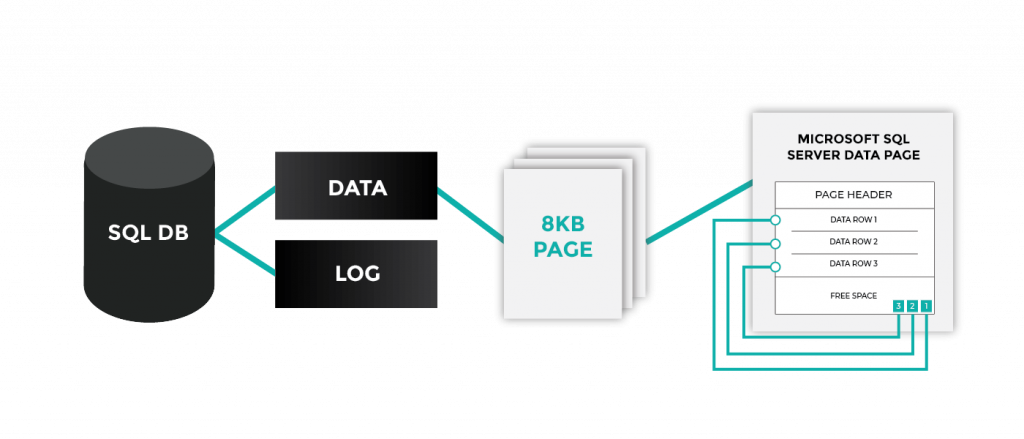

A SQL Server database consists of one or more data files (.mdf/.ndf) and a transaction log file (.ldf). Data files contain the schema and data, and the log file records recent changes and additions. Data is organized into pages (like a book), and each page is 8 KB.
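Because everything in a data file is organized into fixed-size 8 KB pages, locating data is simple arithmetic. This sketch shows how many pages a file of a given size holds and which zero-based page a byte offset falls on; the helper names are illustrative, not part of any SQL Server API, and it ignores SQL Server's internal page headers and allocation structures.

```python
PAGE_SIZE = 8 * 1024  # SQL Server page size: 8 KB = 8,192 bytes


def pages_in_file(file_size_bytes: int) -> int:
    """Number of whole 8 KB pages in a data file of the given size."""
    return file_size_bytes // PAGE_SIZE


def page_for_offset(byte_offset: int) -> int:
    """Zero-based page number containing a given byte offset in a data file."""
    return byte_offset // PAGE_SIZE


GIB = 1024 ** 3
print(pages_in_file(1 * GIB))   # 131072 pages in a 1 GiB file
print(page_for_offset(20_000))  # 2 (pages 0 and 1 cover bytes 0-16,383)
```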

A SQL Server manages this data in three ways:

- Reads

- Writes

- Modifies (deletes, replaces, etc.)

Data Retrieval with SQL

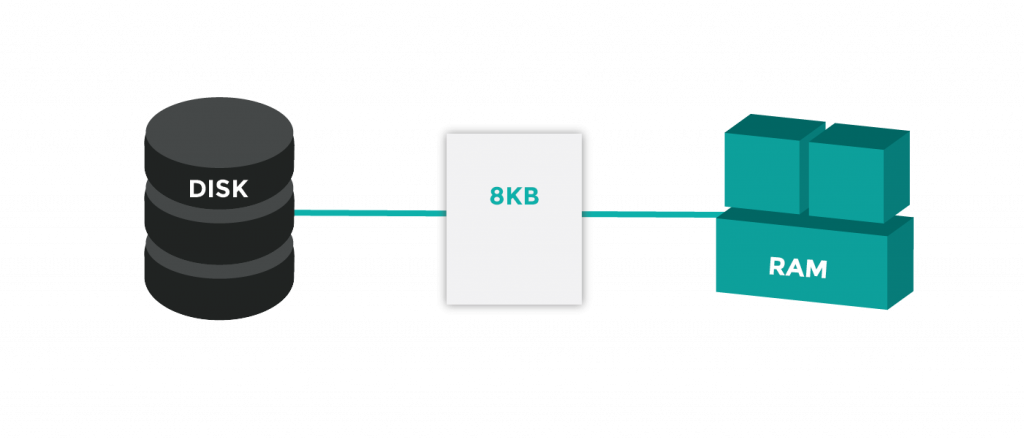

SQL Server accesses data by pulling the entire 8 KB page that contains it from disk into memory. Pages stay in memory temporarily, until they are no longer needed. Often, the same page will be modified or read repeatedly as SQL Server works with the same data set.
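Keeping recently used pages in memory means repeated reads of the same page skip the disk entirely. The sketch below illustrates the idea with a plain least-recently-used (LRU) cache; SQL Server's real buffer manager uses a more sophisticated replacement policy, and the `BufferPool` class and `read_page_from_disk` callable are hypothetical stand-ins.

```python
from collections import OrderedDict

PAGE_SIZE = 8 * 1024  # 8 KB pages


class BufferPool:
    """Toy page cache: keeps up to `capacity` pages in memory, evicting the LRU page."""

    def __init__(self, capacity: int, read_page_from_disk):
        self.capacity = capacity
        self.read_page_from_disk = read_page_from_disk  # hypothetical: page_id -> bytes
        self.pages = OrderedDict()  # page_id -> page bytes, oldest first
        self.disk_reads = 0

    def get_page(self, page_id: int) -> bytes:
        if page_id in self.pages:
            self.pages.move_to_end(page_id)  # cache hit: mark as most recently used
            return self.pages[page_id]
        data = self.read_page_from_disk(page_id)  # miss: load the whole 8 KB page
        self.disk_reads += 1
        self.pages[page_id] = data
        if len(self.pages) > self.capacity:
            self.pages.popitem(last=False)  # evict the least recently used page
        return data


pool = BufferPool(capacity=2, read_page_from_disk=lambda page_id: bytes(PAGE_SIZE))
for page_id in [1, 2, 1, 1, 3, 2]:
    pool.get_page(page_id)
print(pool.disk_reads)  # 4: the repeated reads of page 1 were served from memory
```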

Data Modification with SQL

SQL Server changes data by deleting or modifying it, or by writing new data. All modifications are first written to the transaction log (which sits on disk, where it is safe) in case the server loses power before it writes the changed data back to disk.

The 8 KB page is written back to disk after it has not been used for a certain period of time. Once a transaction’s changes are written to the data files (.mdf/.ndf), the transaction is marked as written in the transaction log. After a power outage, SQL Server can retrieve completed transactions that were not yet written and apply them to the data files (.mdf and .ndf) once it is back in operation.
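The log-first rule and the recovery step can be modeled in a few lines. This is a toy sketch of write-ahead logging, not SQL Server's actual recovery algorithm: the `ToyDatabase` class is hypothetical, it omits the "marked as written" checkpoint bookkeeping (so recovery simply replays every committed change, which is safe because replaying is idempotent here), and it does not model undoing uncommitted work.

```python
from dataclasses import dataclass, field


@dataclass
class ToyDatabase:
    """Toy write-ahead logging: every change is logged before data pages reach disk."""
    data_file: dict = field(default_factory=dict)  # stands in for .mdf/.ndf (survives power loss)
    log: list = field(default_factory=list)        # stands in for .ldf (survives power loss)
    cache: dict = field(default_factory=dict)      # in-memory pages (lost on power loss)

    def update(self, txn: int, key: str, value: str) -> None:
        self.log.append(("update", txn, key, value))  # 1. record the change in the log
        self.cache[key] = value                        # 2. then change the in-memory page

    def commit(self, txn: int) -> None:
        self.log.append(("commit", txn))

    def crash(self) -> None:
        self.cache.clear()  # memory is lost; the data file and log survive

    def recover(self) -> None:
        """Redo changes from committed transactions into the data file."""
        committed = {rec[1] for rec in self.log if rec[0] == "commit"}
        for rec in self.log:
            if rec[0] == "update" and rec[1] in committed:
                _, _, key, value = rec
                self.data_file[key] = value


db = ToyDatabase()
db.update(txn=1, key="balance:alice", value="100")
db.commit(txn=1)
db.update(txn=2, key="balance:bob", value="50")  # never committed
db.crash()    # power lost before any page was written back to the data file
db.recover()
print(db.data_file)  # {'balance:alice': '100'}
```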

Taking Steps to Implement a Microsoft SQL Server

Today’s SQL-dependent applications have different performance and high-availability requirements, so there are many factors to consider during implementation. Thinking about implementing Microsoft SQL Server, or want to make sure yours is properly meeting your needs? INAP’s solutions architects can help with this process, and your SQL Servers can be hosted and managed on Bare Metal or Private Cloud.

INAP’s latest managed cloud solution, Intelligent Monitoring, supports SQL Servers, monitoring for core application metrics. Get transparency and control over your servers with the support of INAP’s experts.


Bare Metal Cloud: Key Advantages and Critical Use Cases to Gain a Competitive Edge

Cloud environments today are part of the IT infrastructure of most enterprises due to all the benefits they provide, including flexibility, scalability, ease of use and pay-as-you-go consumption and billing.

But not all cloud infrastructure is the same.

In this multicloud world, finding the right fit between a workload and a cloud provider becomes a new challenge. Application components, such as web-based content serving platforms, real-time analytics engines, machine learning clusters and Real-Time Bidding (RTB) engines integrating dozens of partners, all require different features and may call for different providers. Enterprises are evaluating application components and IT initiatives on a project-by-project basis, seeking the right cloud provider for each use case. Easy cloud-to-cloud interconnectivity allows scalable applications to be distributed over infrastructure from multiple providers.

What is Bare Metal Cloud?

Bare metal cloud is a deployment model that provides unique and valuable advantages, especially compared with the virtualized (VM-based) cloud models common among hyperscale providers. Let’s explore the benefits of the bare metal cloud model and highlight some use cases where it offers a distinctive edge.

Advantages of the Bare Metal Cloud Model

Both bare metal cloud and the VM-based hyperscale cloud model provide flexibility and scalability. They both allow for DevOps-driven provisioning and the infrastructure-as-code approach. They both help with demand-based capacity management and a pay-as-you-go budget allocation.

But bare metal cloud has unique advantages:

Customizability

Whether you need NVMe storage for high IOPS, a specific GPU model, or a unique RAM-to-CPU ratio or RAID level, bare metal cloud is highly customizable. Your physical server can be built to the unique specifications required by your application.

Dedicated Resources

Bare metal cloud enables high-performance computing because no virtualization is used, so there is no hypervisor overhead. All compute cycles and resources are dedicated to the application.

Tuned for Performance

Bare metal hardware can be tuned for performance and features, be it disabling hyperthreading in the CPU or changing BIOS and IPMI configurations. In the 2018 report Price-Performance Analysis: Bare Metal vs. Cloud Hosting, HorizonIQ Bare Metal was tested against IBM Cloud and Amazon Web Services (AWS) offerings. In Hadoop cluster performance testing, HorizonIQ’s cluster completed the workload 6% faster than IBM Cloud’s bare metal cluster, 6% faster than AWS’s EC2 offering and 3% faster than AWS’s EMR offering.

Additional Security on Dedicated Machine Instances

With a bare metal server, security measures, like full end-to-end encryption or Intel’s Trusted Execution and Open Attestation, can be easily integrated.

Full Hardware Control

Bare metal servers allow full control of the hardware environment. This is especially important when integrating SAN storage, specific firewalls and other unique appliances required by your applications.

Cost Predictability

Bare metal server instances are generally bundled with bandwidth. This eliminates worries about bandwidth overage charges, which tend to cause significant swings in cloud consumption costs and are a major concern for many organizations. For example, the Price-Performance Analysis report concluded that HorizonIQ’s Bare Metal machine configuration was 32 percent less expensive than the same configuration running on IBM Cloud. The report can be found for download here.
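Why bundled bandwidth makes costs predictable can be shown with two simple billing models. This sketch uses entirely hypothetical prices ($500 base plus $0.08 per GB of egress for the metered model; $1,200 flat with 50 TB included for the bundled model); they are not HorizonIQ's, IBM's or any other provider's actual rates.

```python
def metered_monthly_cost(base_usd: float, egress_tb: float, usd_per_gb: float) -> float:
    """Base compute price plus a per-GB charge on all outbound traffic."""
    return base_usd + egress_tb * 1_000 * usd_per_gb  # 1 TB = 1,000 GB (decimal units)


def bundled_monthly_cost(flat_usd: float, egress_tb: float, included_tb: float,
                         overage_usd_per_gb: float) -> float:
    """Flat price that includes a bandwidth allowance; only traffic above it is billed."""
    overage_tb = max(0.0, egress_tb - included_tb)
    return flat_usd + overage_tb * 1_000 * overage_usd_per_gb


monthly_egress_tb = [10, 25, 40]  # hypothetical traffic that varies month to month
metered = [metered_monthly_cost(500, tb, 0.08) for tb in monthly_egress_tb]
bundled = [bundled_monthly_cost(1_200, tb, included_tb=50, overage_usd_per_gb=0.05)
           for tb in monthly_egress_tb]

for tb, m, b in zip(monthly_egress_tb, metered, bundled):
    print(f"{tb:>3} TB egress  metered: ${m:,.0f}  bundled: ${b:,.0f}")
```

With these made-up numbers, the metered bill nearly triples as traffic grows while the bundled bill stays flat as long as usage remains within the included allowance.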

Efficient Compute Resources

Bare metal cloud offers more cost-effective compute resources when compared to the VM-based model for similar compute capacity in terms of cores, memory and storage.

Bare Metal Cloud Workload Application Use Cases

Given these benefits, a bare metal cloud provides a competitive advantage for many applications. Feedback from customers indicates it is critical for some use cases. Here is a long—but not exhaustive—list of use cases:

- High-performance computing, where any overhead should be avoided, and hardware components are selected and tuned for maximum performance: e.g., computing clusters for silicon chip design.

- AdTech and Fintech applications, especially where Real-Time Bidding (RTB) is involved and speedy access to user profiles and assets data is required.

- Real-time analytics/recommendation engine clusters where specific hardware and storage are needed to support the real-time nature of the workloads.

- Gaming applications where performance is needed either for raw compute or 3-D rendering. Hardware is commonly tuned for such applications.

- Workloads where database access time is essential. In such cases, special hardware components are used, or high performance NVMe-based SAN arrays are integrated.

- Security-oriented applications that leverage unique Intel/AMD CPU features: end-to-end encryption including memory, trusted execution environments, etc.

- Applications with high outbound bandwidth usage, especially collaboration applications based on real-time communications and WebRTC platforms.

- Cases where a dedicated compute environment is needed either by policy, due to business requirements or for compliance.

- Most applications where compute resource usage is steady and continuous, the application is not dependent on PaaS services, the hardware footprint size is considerable, and cost is a limiting concern.

Is Bare Metal Cloud Your Best Fit?

Bare metal cloud provides many benefits when compared with virtualization-based cloud offerings.

Bare metal allows for high-performance computing on highly customizable hardware that can be tuned for maximum performance. It offers a dedicated compute environment with more control over resources and stronger security, in a cost-effective way.

Bare metal cloud can be an attractive solution to consider for your next workload or application and it is a choice validated and proven by some of the largest enterprises with mission-critical applications.